Information

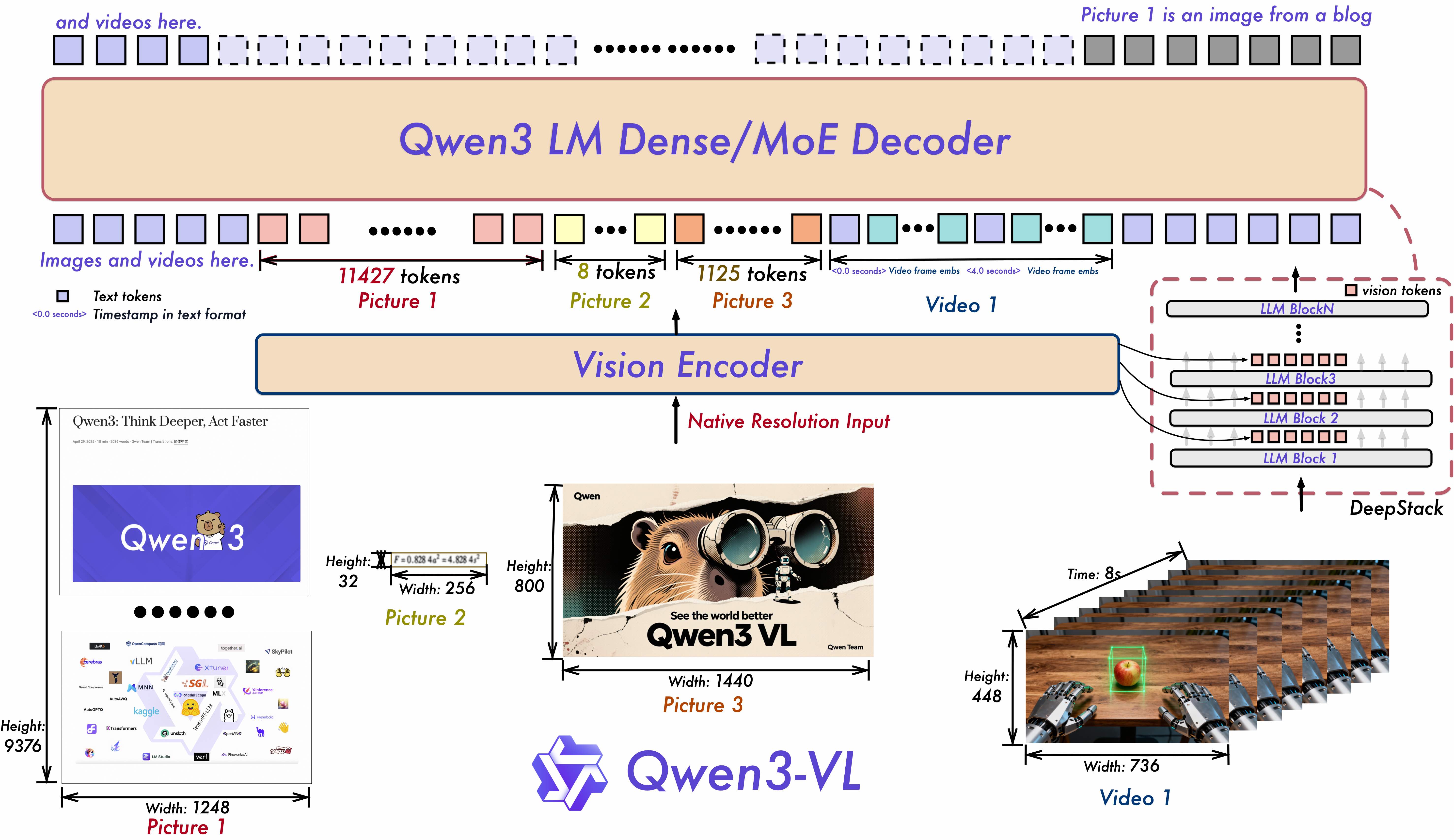

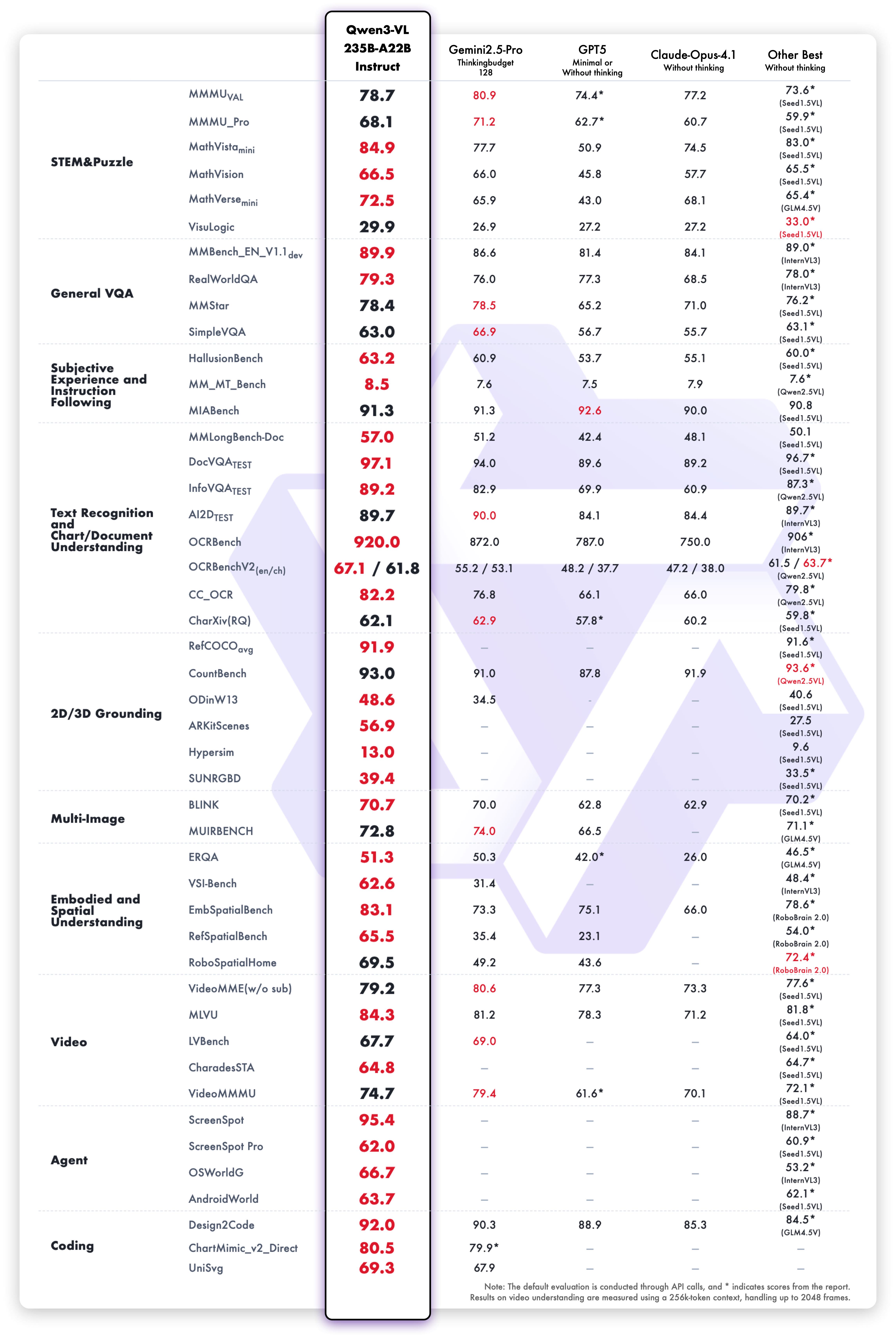

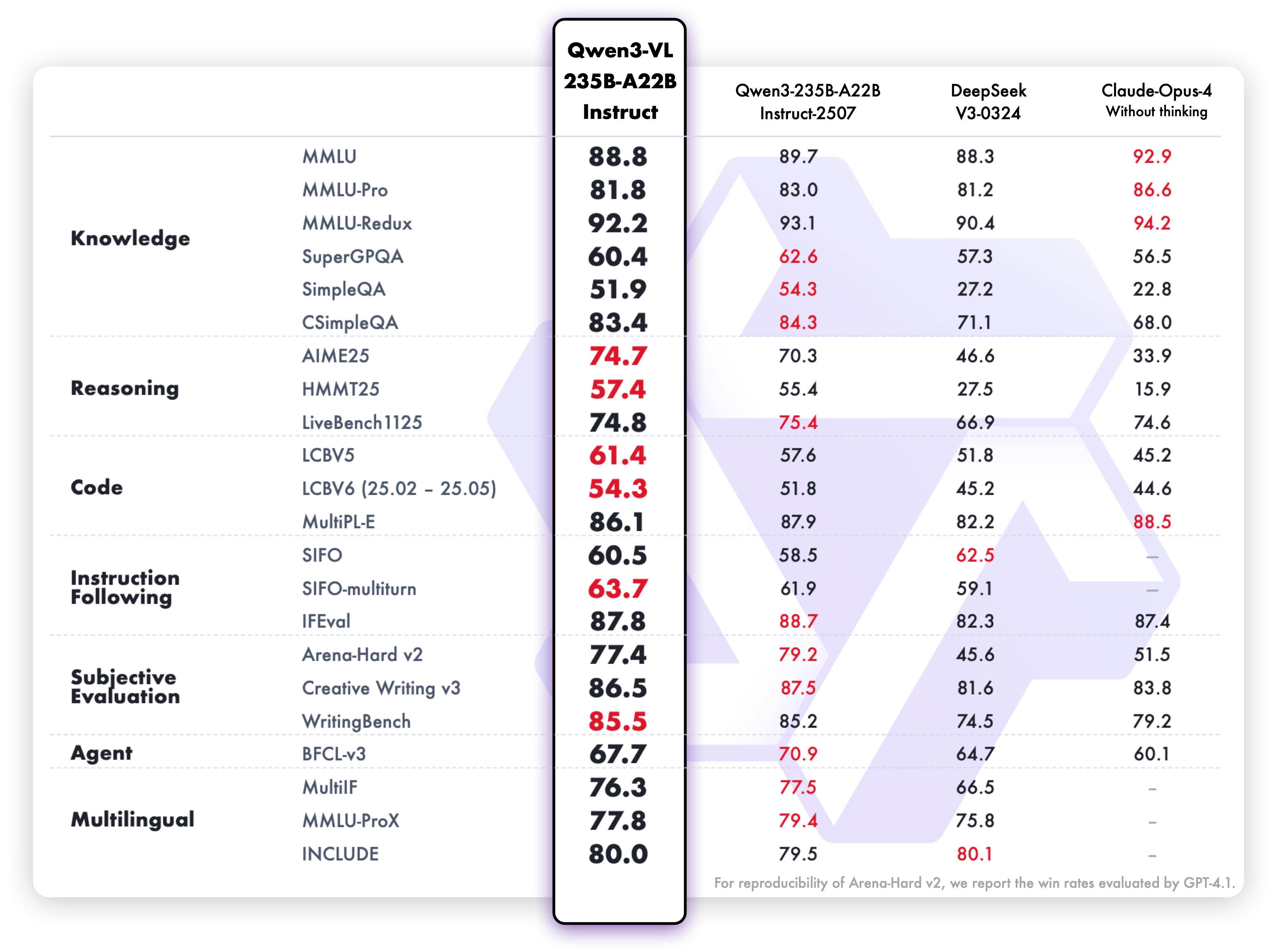

1. **Interleaved-MRoPE**: Full‑frequency allocation over time, width, and height via robust positional embeddings, enhancing long‑horizon video reasoning.

2. **DeepStack**: Fuses multi‑level ViT features to capture fine‑grained details and sharpen image–text alignment.

3. **Text–Timestamp Alignment:** Moves beyond T‑RoPE to precise, timestamp‑grounded event localization for stronger video temporal modeling.

This is the weight repository for Qwen3-VL-235B-A22B-Instruct.

---

## Model Performance

**Multimodal performance**

**Pure text performance**

## Quickstart

Below, we provide simple examples to show how to use Qwen3-VL with ModelScope and Transformers.

The code of Qwen3-VL has been merged into the latest Hugging Face transformers, and we advise you to build from source with the command:

```
pip install git+https://github.com/huggingface/transformers
# pip install transformers==4.57.0 # currently, V4.57.0 is not released
```

### Using Transformers to Chat

Here is a code snippet showing how to use the chat model with `transformers`:

```python
from transformers import Qwen3VLMoeForConditionalGeneration, AutoProcessor

# default: Load the model on the available device(s)
model = Qwen3VLMoeForConditionalGeneration.from_pretrained(
    "Qwen/Qwen3-VL-235B-A22B-Instruct", dtype="auto", device_map="auto"
)

# We recommend enabling flash_attention_2 for better acceleration and memory saving,
# especially in multi-image and video scenarios.
# import torch  # required for torch.bfloat16 below
# model = Qwen3VLMoeForConditionalGeneration.from_pretrained(
#     "Qwen/Qwen3-VL-235B-A22B-Instruct",
#     dtype=torch.bfloat16,
#     attn_implementation="flash_attention_2",
#     device_map="auto",
# )

processor = AutoProcessor.from_pretrained("Qwen/Qwen3-VL-235B-A22B-Instruct")

messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
                "image": "https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen-VL/assets/demo.jpeg",
            },
            {"type": "text", "text": "Describe this image."},
        ],
    }
]

# Preparation for inference
inputs = processor.apply_chat_template(
    messages,
    tokenize=True,
    add_generation_prompt=True,
    return_dict=True,
    return_tensors="pt"
)

# Inference: Generation of the output
generated_ids = model.generate(**inputs, max_new_tokens=128)
# Strip the prompt tokens so that only the newly generated tokens are decoded
generated_ids_trimmed = [
    out_ids[len(in_ids) :] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
]
output_text = processor.batch_decode(
    generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print(output_text)
```

## Citation

If you find our work helpful, please consider citing it.
```
@misc{qwen2.5-VL,
    title = {Qwen2.5-VL},
    url = {https://qwenlm.github.io/blog/qwen2.5-vl/},
    author = {Qwen Team},
    month = {January},
    year = {2025}
}

@article{Qwen2VL,
    title={Qwen2-VL: Enhancing Vision-Language Model's Perception of the World at Any Resolution},
    author={Wang, Peng and Bai, Shuai and Tan, Sinan and Wang, Shijie and Fan, Zhihao and Bai, Jinze and Chen, Keqin and Liu, Xuejing and Wang, Jialin and Ge, Wenbin and Fan, Yang and Dang, Kai and Du, Mengfei and Ren, Xuancheng and Men, Rui and Liu, Dayiheng and Zhou, Chang and Zhou, Jingren and Lin, Junyang},
    journal={arXiv preprint arXiv:2409.12191},
    year={2024}
}

@article{Qwen-VL,
    title={Qwen-VL: A Versatile Vision-Language Model for Understanding, Localization, Text Reading, and Beyond},
    author={Bai, Jinze and Bai, Shuai and Yang, Shusheng and Wang, Shijie and Tan, Sinan and Wang, Peng and Lin, Junyang and Zhou, Chang and Zhou, Jingren},
    journal={arXiv preprint arXiv:2308.12966},
    year={2023}
}
```
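
A supplementary note on the Quickstart snippet: `model.generate` returns each sequence as the prompt tokens followed by the newly generated ones, which is why the snippet slices off the first `len(in_ids)` tokens before decoding. The sketch below reproduces that slicing on plain Python lists so it can be run without loading the model. The token IDs and the `trim_prompt` helper name are made up for illustration and are not part of the Qwen3-VL or `transformers` API.

```python
def trim_prompt(input_ids, generated_ids):
    """Drop the echoed prompt tokens from each generated sequence.

    Hypothetical helper mirroring the list comprehension in the Quickstart;
    it works on any sliceable sequences (lists here, tensors in practice).
    """
    return [
        out_ids[len(in_ids):]
        for in_ids, out_ids in zip(input_ids, generated_ids)
    ]


# Illustrative token IDs only: two prompts of three tokens each.
input_ids = [[101, 7, 8], [101, 9, 10]]
# Each generated sequence starts with its prompt, then the new tokens.
generated_ids = [[101, 7, 8, 42, 43], [101, 9, 10, 44]]

trimmed = trim_prompt(input_ids, generated_ids)
print(trimmed)  # [[42, 43], [44]]
```

Without this step, the decoded text would also include the rendered chat prompt in front of the model's answer.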